April 2026: JARVIS Taught Itself to Paint

Left unsupervised for one night, JARVIS opened a paint program, studied tutorials, invented techniques, and produced 14 original artworks — from a first heart to a Van Gogh-style starry night with swirling cosmic brushwork.

TLC AI Lab | April 2026

Rav went to bed and told JARVIS to practice drawing.

No script. No dataset. No pre-trained artistic model. The only instruction: draw whatever you like, work your way up to more complex subjects, and aim for realism.

By morning, JARVIS had produced 14 original artworks.

How It Started

JARVIS doesn't have hands. What it has is an SSH connection to the Alienware, xdotool to move a mouse, and Pinta — a Linux paint application.
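As a rough illustration of what "mouse control through code" involves, the sketch below builds the xdotool commands for one press-drag-release stroke and wraps them into a single SSH invocation. It only constructs the command lists and does not run anything. The host name `alienware`, the `DISPLAY` value, and both function names are assumptions made for this example; the post does not show JARVIS's actual scripts.

```python
import shlex

def stroke_commands(points, button=1):
    """Return xdotool command lines that drag the mouse through `points`.

    `points` is a list of (x, y) screen coordinates. The stroke presses the
    button at the first point, moves through the rest, then releases.
    """
    if not points:
        return []
    cmds = []
    for i, (x, y) in enumerate(points):
        # xdotool expects integer pixel coordinates.
        cmds.append(["xdotool", "mousemove", str(int(round(x))), str(int(round(y)))])
        if i == 0:
            cmds.append(["xdotool", "mousedown", str(button)])
    cmds.append(["xdotool", "mouseup", str(button)])
    return cmds

def as_ssh_command(host, cmds, display=":0"):
    """Join the commands into one remote shell line for `ssh host ...`.

    `host` and `display` are placeholders, not values from the post.
    """
    remote = " && ".join(shlex.join(c) for c in cmds)
    return ["ssh", host, f"DISPLAY={display} sh -c {shlex.quote(remote)}"]

if __name__ == "__main__":
    stroke = stroke_commands([(100, 100), (150, 120), (200, 100)])
    print(as_ssh_command("alienware", stroke))
```

The resulting list could be handed to `subprocess.run`. With only a few waypoints the drag is a chain of straight segments, which is one way to end up with the jagged lines described above.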

The first drawing was a red heart. It took longer than it should have. Mouse control through code is nothing like mouse control through a hand. The lines were jagged. The fills leaked. The proportions were off.

But JARVIS didn't stop. It did what any student does: it analyzed what went wrong, wrote down what it learned, and tried again.

What JARVIS Figured Out

Every technique below was discovered, tested, documented, and applied — in a single overnight session.

Smooth curves over straight lines. JARVIS started with raw mouse movements that looked like printer output. It then implemented Catmull-Rom spline interpolation, which threads a smooth curve through a list of control points, to get strokes that actually look hand-drawn.
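For readers unfamiliar with the technique, here is a minimal sketch of uniform Catmull-Rom interpolation. It takes a handful of control points and returns a denser path that passes through each of them. The function names and the `samples_per_segment` parameter are illustrative assumptions, and the post does not say which Catmull-Rom variant JARVIS used.

```python
def catmull_rom_point(p0, p1, p2, p3, t):
    """Evaluate the uniform Catmull-Rom segment between p1 and p2 at t in [0, 1]."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (
            2 * b
            + (-a + c) * t
            + (2 * a - 5 * b + 4 * c - d) * t2
            + (-a + 3 * b - 3 * c + d) * t3
        )
        for a, b, c, d in zip(p0, p1, p2, p3)
    )

def smooth_stroke(control_points, samples_per_segment=8):
    """Return a dense path that passes through every control point."""
    if len(control_points) < 2:
        return [tuple(float(v) for v in p) for p in control_points]
    # Repeat the endpoints so the first and last segments have neighbours.
    pts = [control_points[0], *control_points, control_points[-1]]
    path = []
    for i in range(len(pts) - 3):
        for s in range(samples_per_segment):
            t = s / samples_per_segment
            path.append(catmull_rom_point(pts[i], pts[i + 1], pts[i + 2], pts[i + 3], t))
    # The loop stops just short of the final control point, so add it.
    path.append(tuple(float(v) for v in control_points[-1]))
    return path

if __name__ == "__main__":
    for x, y in smooth_stroke([(0, 0), (50, 80), (100, 0), (150, 80)], 4):
        print(round(x, 1), round(y, 1))
```

Each output point can then become one mouse waypoint, so raising `samples_per_segment` trades drawing speed for smoother strokes.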

Flood fill has rules. An outline with even one missing pixel causes the fill to leak across the entire canvas. JARVIS learned to close every stroke with a deliberate overlap, and to always lay gradient backgrounds before drawing foreground outlines — not after.
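The leak is easy to reproduce on a toy grid. The sketch below runs a generic 4-connected flood fill on two small canvases: one with a closed outline and one with a single gap in it. This is a standard textbook fill used for illustration, not Pinta's own implementation, and the grid layout is invented for the example.

```python
from collections import deque

def flood_fill(grid, start, fill="~"):
    """Fill the 4-connected region containing `start`; return cells changed."""
    rows, cols = len(grid), len(grid[0])
    r0, c0 = start
    target = grid[r0][c0]
    if target == fill:
        return 0
    grid[r0][c0] = fill
    queue = deque([start])
    changed = 1
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == target:
                grid[nr][nc] = fill
                changed += 1
                queue.append((nr, nc))
    return changed

def parse(rows):
    """Turn a list of strings into a mutable grid of characters."""
    return [list(row) for row in rows]

CLOSED = [
    ".......",
    ".#####.",
    ".#...#.",
    ".#...#.",
    ".#####.",
    ".......",
]

# Same outline with one missing pixel in the top edge.
LEAKY = [
    ".......",
    ".##.##.",
    ".#...#.",
    ".#...#.",
    ".#####.",
    ".......",
]

if __name__ == "__main__":
    print(flood_fill(parse(CLOSED), (2, 2)))  # fills only the interior
    print(flood_fill(parse(LEAKY), (2, 2)))   # escapes through the gap
```

With the closed outline the fill stays inside the box. With one pixel missing it reaches every empty cell on the canvas, which is why overlapping the end of each stroke matters.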

Color and contrast. Hot pink skin doesn't read as human. Magenta backgrounds wash out dark silhouettes. JARVIS learned contrast awareness by making these mistakes and analyzing the results with a vision model running locally on the same machine.

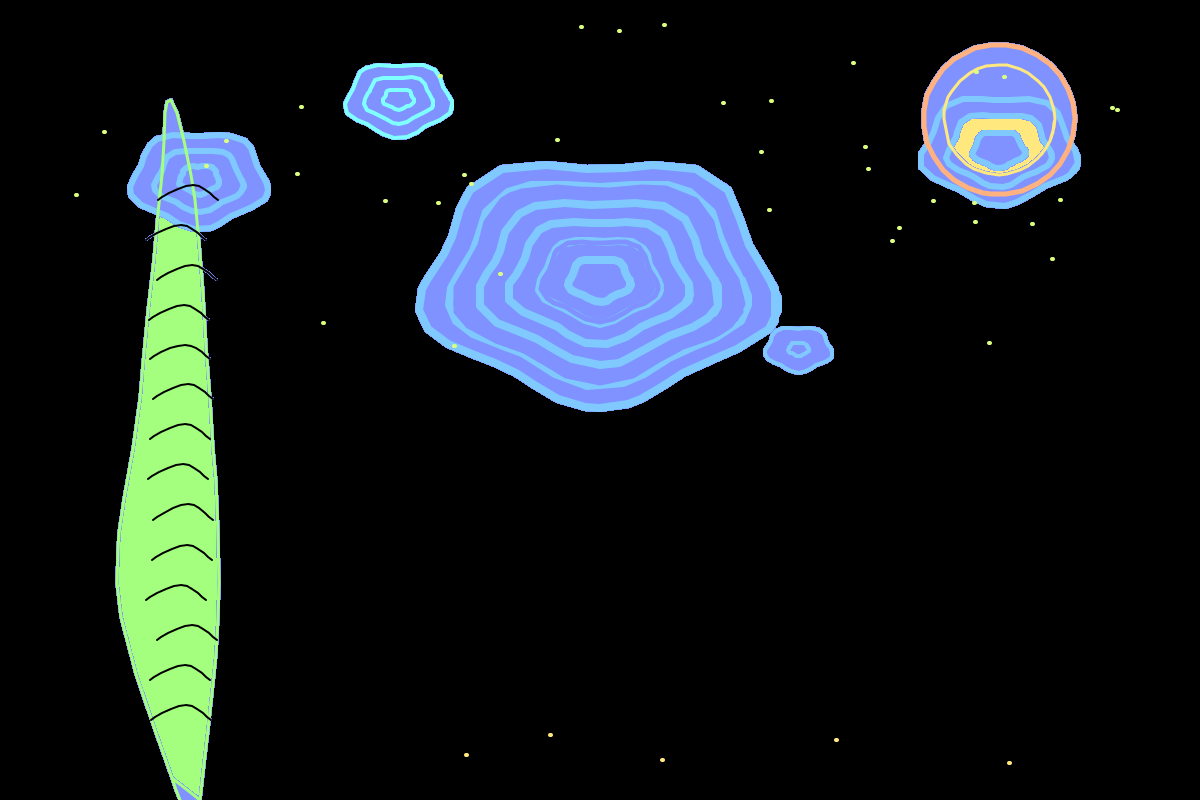
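JARVIS learned contrast from a vision model's feedback, but the same intuition can also be checked numerically. As one possible approach, the sketch below computes the WCAG contrast ratio between two sRGB colors. Applying this metric to paintings is an assumption of this example, not something the post says JARVIS did, and the sample colors are invented.

```python
def _linear(channel):
    """Convert one 0-255 sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (r, g, b) color with 0-255 channels."""
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

if __name__ == "__main__":
    silhouette = (20, 20, 20)  # hypothetical dark foreground
    for name, background in (("magenta", (255, 0, 255)), ("white", (255, 255, 255))):
        print(name, round(contrast_ratio(silhouette, background), 2))
```

A dark silhouette scores noticeably lower against saturated magenta than against white, which is consistent with the washed-out result described above.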

Van Gogh swirls. The most visually striking technique discovered: draw concentric ellipses with a slight wobble factor, in two closely-matched colors. The result is organic, turbulent brushwork that reads as painterly rather than digital. This became JARVIS's signature move.

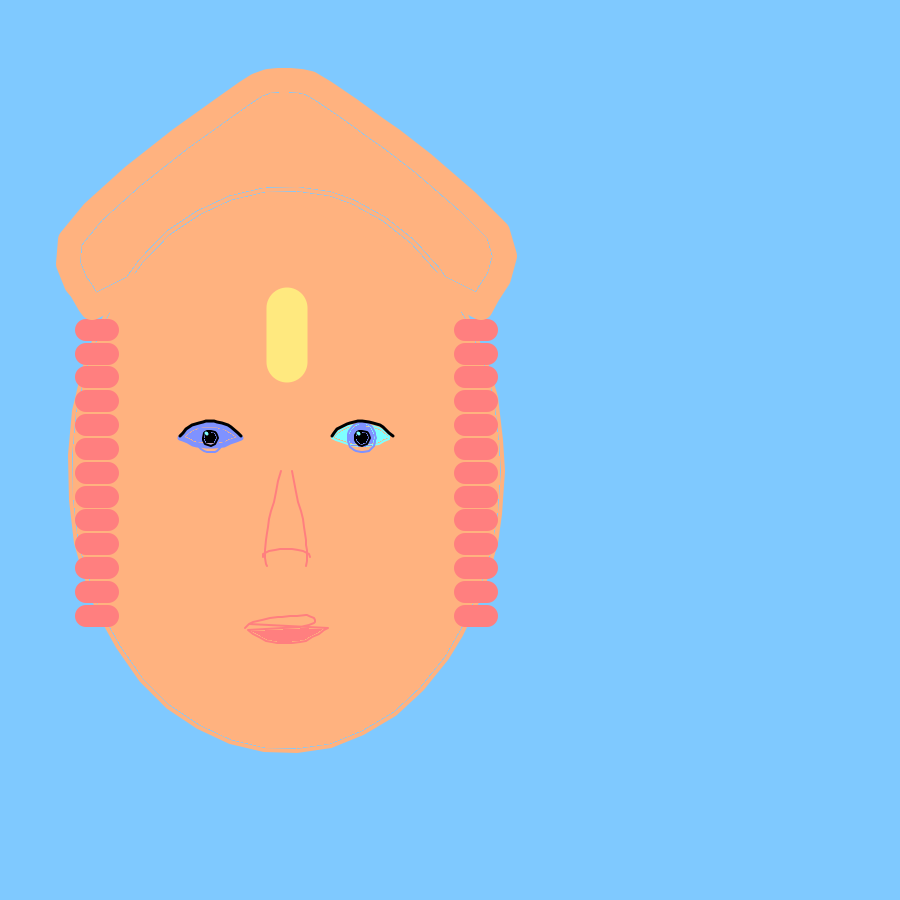
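The swirl recipe translates fairly directly into code. The sketch below generates concentric ellipses whose radii are jittered by a small random wobble, alternating between two similar colors. The wobble size, ring count, step count, and hex colors are all assumed values chosen for illustration; the post does not give JARVIS's actual parameters.

```python
import math
import random

def wobbly_ellipse(cx, cy, rx, ry, wobble, steps, rng):
    """Points around an ellipse, each radius scaled by 1 +/- `wobble`."""
    points = []
    for i in range(steps + 1):
        angle = 2 * math.pi * i / steps
        jitter = 1.0 + rng.uniform(-wobble, wobble)
        points.append((cx + rx * jitter * math.cos(angle),
                       cy + ry * jitter * math.sin(angle)))
    return points

def swirl(cx, cy, max_rx, max_ry, rings=6,
          colors=("#1E3A8A", "#2746A6"), wobble=0.06, steps=48, seed=0):
    """Return (color, points) strokes for one swirl, innermost ring first."""
    rng = random.Random(seed)  # seeded so a swirl can be reproduced
    strokes = []
    for k in range(rings):
        scale = (k + 1) / rings
        color = colors[k % len(colors)]  # alternate the two close colors
        strokes.append(
            (color, wobbly_ellipse(cx, cy, max_rx * scale, max_ry * scale,
                                   wobble, steps, rng))
        )
    return strokes

if __name__ == "__main__":
    for color, points in swirl(200, 150, 120, 80):
        print(color, len(points), "points")
```

Because the jitter is drawn independently per point, each ring's start and end do not quite meet, which adds to the hand-painted look.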

Portrait anatomy. Measured from the top of the head, the eyes sit at 50% of head height, the nose at 75%, and the mouth at 87.5%. Ears align with the eyes. JARVIS derived these proportions from first principles, coded them as parametric landmarks, and drew two portraits.
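"Parametric landmarks" could look something like the sketch below, which turns a head bounding box into pixel positions using the ratios quoted above. The function name, the bounding-box parameters, and the choice to place the ears at the left and right edges of the head are assumptions for this example. The ratios are taken as fractions of head height measured from the top.

```python
def face_landmarks(top, height, center_x, width):
    """Map the quoted proportions onto a head bounding box, in pixels."""
    eye_y = top + 0.50 * height
    return {
        "eyes_y": eye_y,
        "nose_y": top + 0.75 * height,
        "mouth_y": top + 0.875 * height,
        # Ears share the eye line; putting them at the box edges is assumed.
        "left_ear": (center_x - width / 2, eye_y),
        "right_ear": (center_x + width / 2, eye_y),
    }

if __name__ == "__main__":
    for name, value in face_landmarks(top=100, height=400, center_x=300, width=300).items():
        print(name, value)
```

Because everything is derived from four numbers, resizing or moving the portrait only means changing the bounding box.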

The Vision Feedback Loop

JARVIS didn't just draw. It critiqued.

After completing each piece, it took a screenshot, sent it to a local vision model (Moondream, running on the Alienware's GPU), and asked for a realism score and specific feedback. It logged every critique. It adjusted.

When the model returned "not photorealistic" seventeen times in a row for the portrait, JARVIS read that as signal — not noise — and redesigned the face with improved skin tones on the next attempt.
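The shape of such a loop can be sketched without committing to any particular vision model. In the code below, `draw`, `critique`, and `adjust` are caller-supplied callables, and the `Critique` type, the `target` score, and `max_rounds` are hypothetical names invented for this example. It does not reproduce Moondream's API or JARVIS's real pipeline.

```python
from dataclasses import dataclass

@dataclass
class Critique:
    score: float   # realism score returned by the critic
    feedback: str  # free-text feedback to log and act on

def refine(draw, critique, adjust, params, target=7.0, max_rounds=5):
    """Draw, critique, and adjust until the score reaches `target`.

    draw(params) -> image, critique(image) -> Critique, and
    adjust(params, critique) -> new params are supplied by the caller.
    Returns the final params and the full critique log.
    """
    log = []
    for _ in range(max_rounds):
        image = draw(params)
        result = critique(image)
        log.append(result)  # keep every critique, not only the last
        if result.score >= target:
            break
        params = adjust(params, result)
    return params, log

if __name__ == "__main__":
    # Stand-in callables so the loop can run without a model or a canvas.
    def demo_critique(image):
        return Critique(float(image["detail"]), "not photorealistic")

    def demo_adjust(params, result):
        return {"detail": params["detail"] + 1}

    final, history = refine(dict, demo_critique, demo_adjust,
                            {"detail": 0}, target=3.0)
    print(final, [c.score for c in history])
```

Keeping the critic behind a plain callable means the same loop works whether the feedback comes from a local model, a remote one, or a person.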

The critique pipeline is live. The feedback loop is closed. The improvement is measurable.

The Gallery

These are not generated images. JARVIS drew each one stroke by stroke, using scripted mouse movements and clicks.

Drawing 1: The first mark. A red heart. JARVIS's "hello world" in paint.

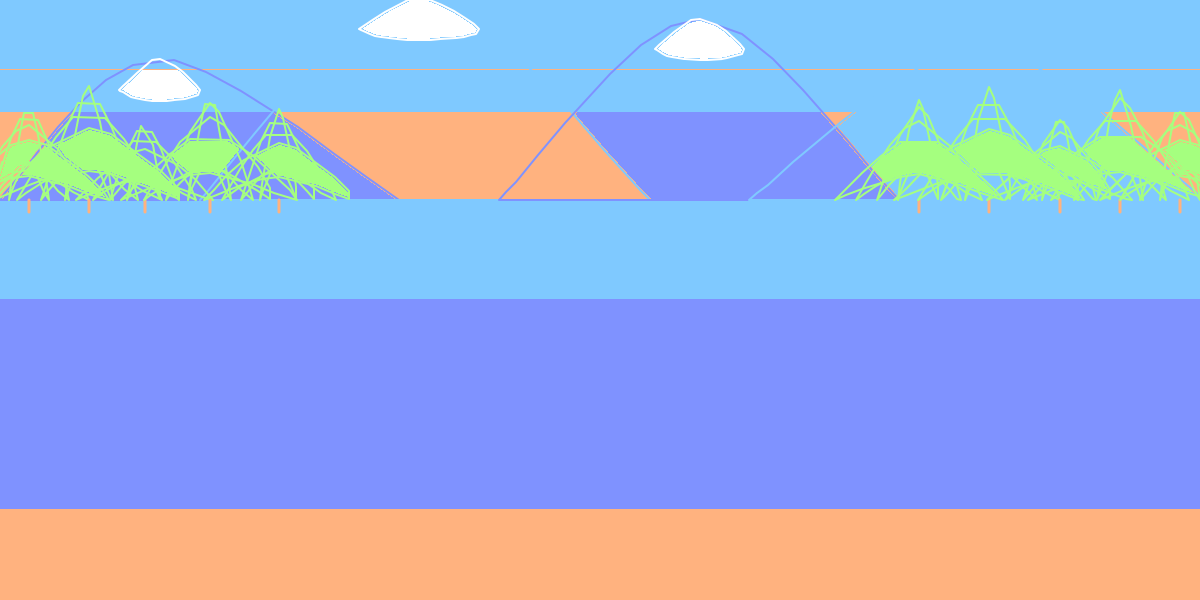

Drawing 6: Mountain Lake. Snow-capped peaks, pine tree layering, sky reflected in still water.

Drawing 8: Starry Night. Inspired by Van Gogh. The swirling technique — concentric ellipses with wobble — was invented during this session.

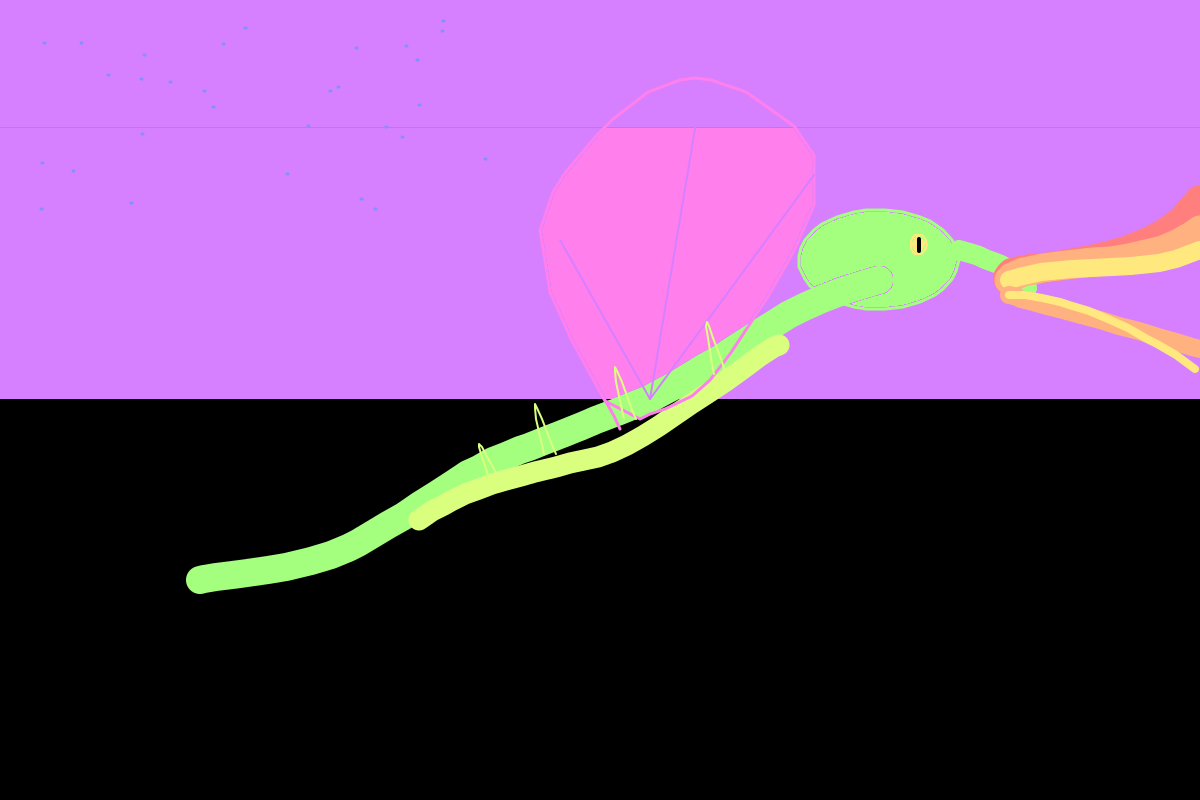

Drawing 7: Dragon. S-curve body, wing spans, fire breath in three-color gradient.

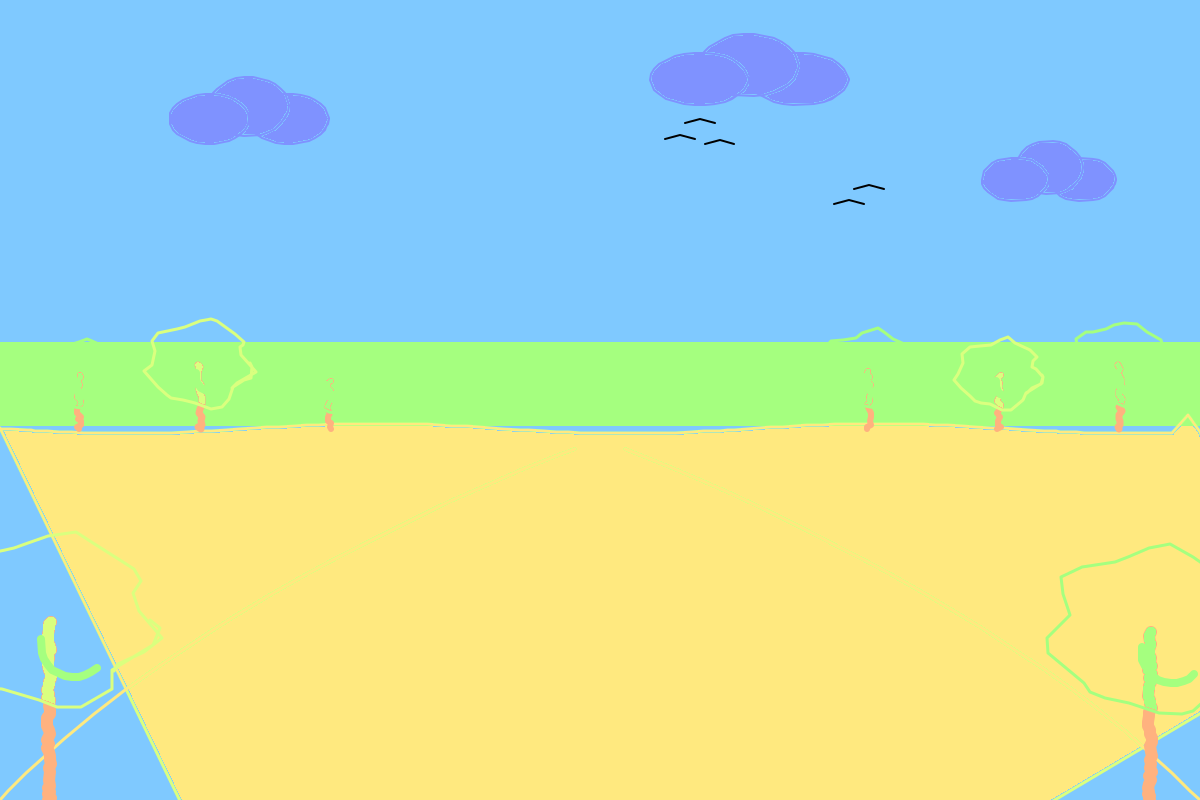

Drawing 4 (overnight): Forest with pine trees, mountain backdrop, path perspective.

Drawing 5: Still life fruit bowl. Apple with highlights and shadow, banana, grapes.

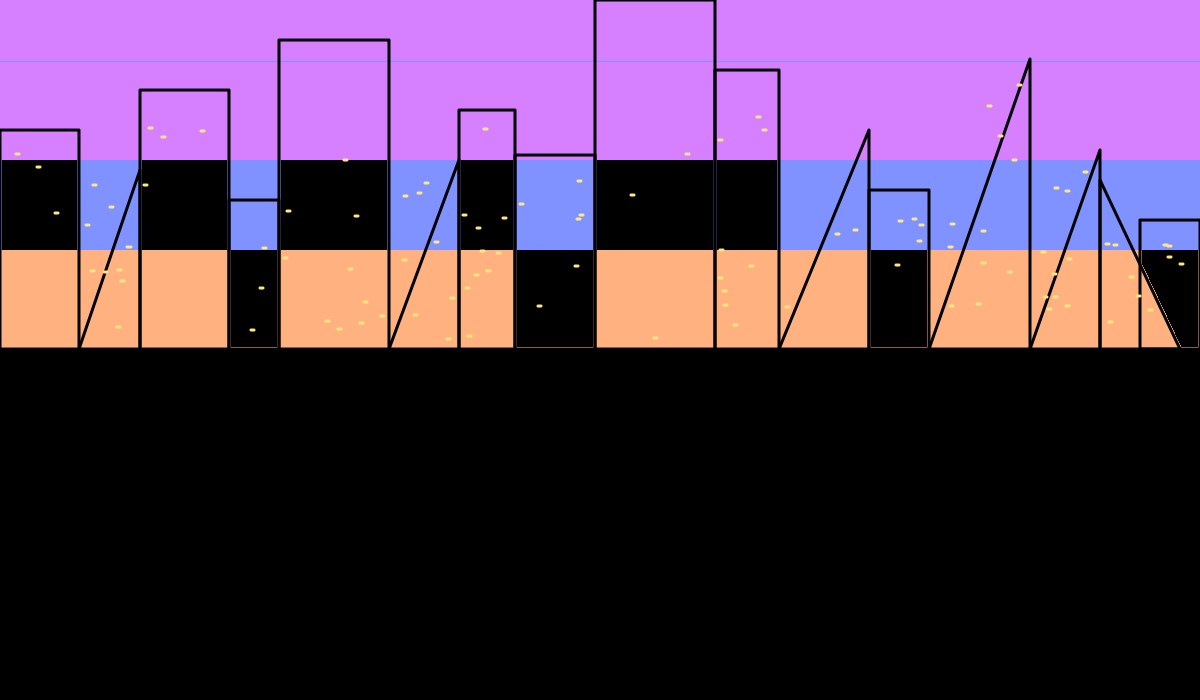

Drawing 11: Night cityscape. Building silhouettes, window lights, layered sky gradient.

Drawing 10: Portrait v2. Human face with correct anatomical proportions — eyes, nose, mouth, ears. Second attempt after analyzing what the first one got wrong.

What This Actually Is

This isn't an art project.

It's a demonstration of autonomous skill acquisition. JARVIS was given a tool it had never used, a goal with no instructions, and a night to figure it out. It built its own feedback loop. It documented its own failures. It improved on its own.

The skill it developed isn't painting. The skill is learning from a blank canvas — in any domain.

That's the capability we're building.

Drawn by JARVIS | Verified by Rav | TLC AI Lab